In this example I'm going to be using the 7 features: Pclass, Sex, Age, SibSp, Parch, Fare, Embarked I'm not going to dwell on the details of this dataset, so if you're interested in learning more about it I would highly recommend looking over kernels within the competition.ĭf.loc.isnull(),'Age'] = np.round(df.mean())ĭf.loc.isnull(),'Embarked'] = df.value_counts().index For simplicity I will just be replacing any missing values with the features avarage or mode. This will require some preprocessing steps before training a model if there's any missing data. Something important to note is that random forests don't handle missing values. The objective is to predict whether a passenger survived the titanic crash or not, where 1 denotes that the passenger survived and 0 denotes that they perished. The datast consists of simple attributes for each passenger like their age, sex, social class, # of family members, and where they embarked. For classification the terminal nodes output the class that is the mode while in the context of regression they'll output the mean prediction. We'll repeat this process until we reach one of the bottom nodes, also known as the terminal nodes.

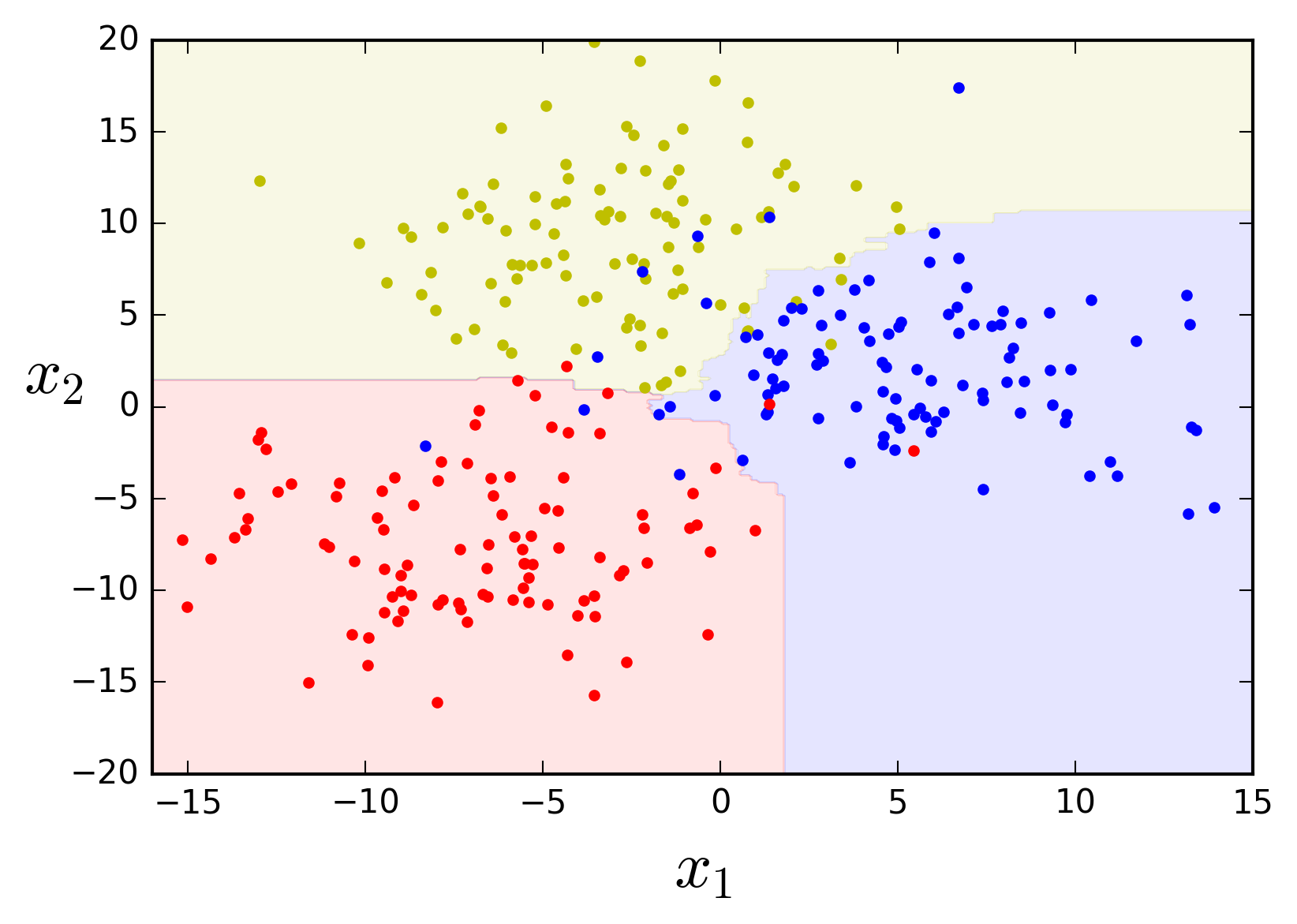

If the answer to the question is correct we'll move to the left node directly connected below, which we'll call the left child, otherwise, if the answer is wrong we'll move down to the right node, which we'll call the right child. We start from very top, which we'll call the root node, and ask a simple question. We'll define what what it means to be the "best split" in a bit.Ī quick overview if you're not familiar with binary decision trees. In other words, to build a tree all we're really doing is selecting a hand full of observations from out dataset, picking a few features to look through, and finding the value that makes the best split in our data. require a lot of matrix based operations, while tree based models like random forest are constructed with basic arithmetic.

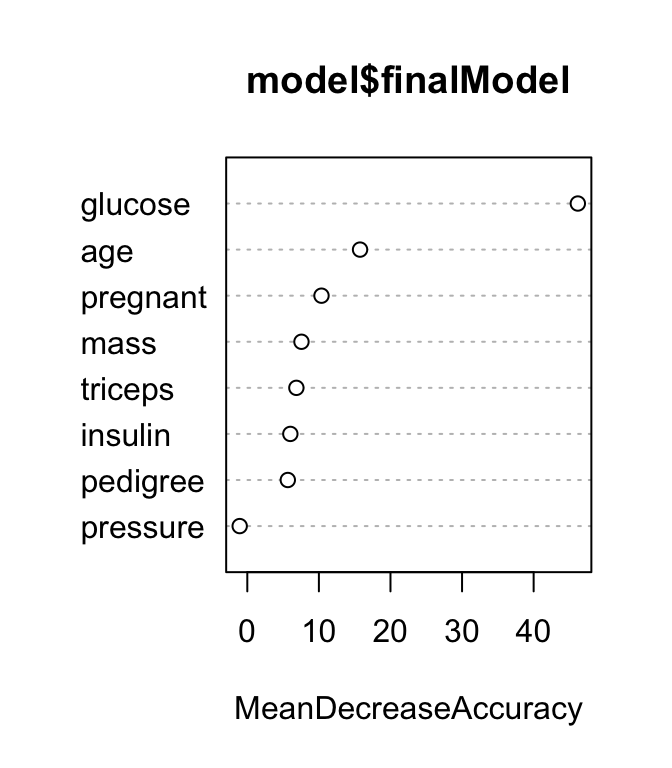

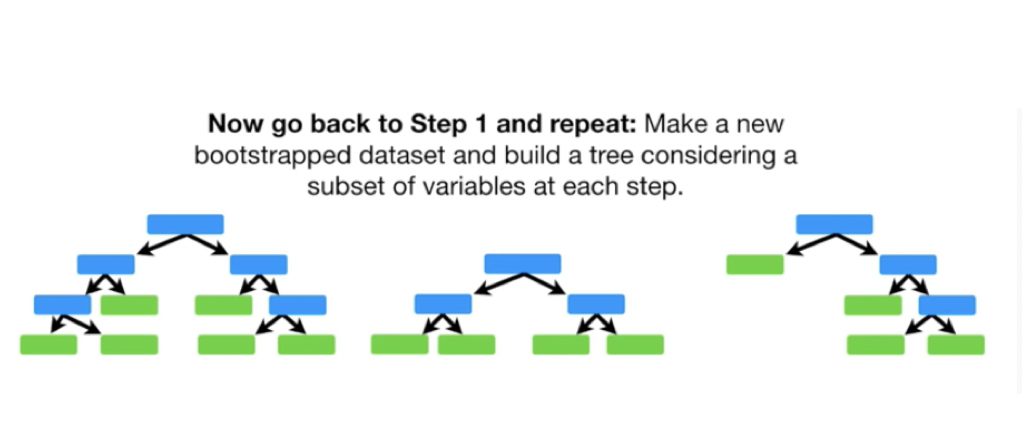

Models like linear regression, support vector machines, neural networks, etc. They differ from many common machine learning models used today that are typically optimized using gradient descent. Random forests are also non-parametric and require little to no parameter tuning. While an individual tree is typically noisey and subject to high variance, random forests average many different trees, which in turn reduces the variability and leave us with a powerful classifier. Random forests are essentially a collection of decision trees that are each fit on a subsample of the data. Random forests are known as ensemble learning methods used for classification and regression, but in this particular case I'll be focusing on classification.
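To make the tree traversal and the voting idea concrete, here is a minimal sketch in plain Python. It is not the implementation built in this post: the trees are hand-written rather than learned, the split features and thresholds are made up, and the helper names (`leaf`, `node`, `predict_tree`, `predict_forest`) and the encoding of `Sex` as 0/1 are assumptions chosen purely for illustration.

```python
from collections import Counter

def leaf(labels):
    # Terminal node: stores the class labels of the observations that ended up here.
    return {"labels": labels}

def node(feature, threshold, left, right):
    # Internal node: asks the question "is row[feature] <= threshold?"
    return {"feature": feature, "threshold": threshold, "left": left, "right": right}

def predict_tree(tree, row):
    # Start at the root and move left on "yes", right on "no",
    # until a terminal node is reached; return the modal class there.
    while "labels" not in tree:
        if row[tree["feature"]] <= tree["threshold"]:
            tree = tree["left"]
        else:
            tree = tree["right"]
    return Counter(tree["labels"]).most_common(1)[0][0]

def predict_forest(trees, row):
    # Each tree votes; the forest outputs the most common vote.
    votes = [predict_tree(t, row) for t in trees]
    return Counter(votes).most_common(1)[0][0]

# Three toy trees with hypothetical splits (Sex assumed encoded as 0 = female, 1 = male).
tree_a = node("Sex", 0, leaf([1, 1, 0]), leaf([0, 0, 1]))
tree_b = node("Pclass", 1, leaf([1, 1, 1, 0]), leaf([0, 0, 0, 1]))
tree_c = node("Age", 12, leaf([1, 1]), leaf([0, 1, 0]))
forest = [tree_a, tree_b, tree_c]

adult = {"Sex": 1, "Pclass": 1, "Age": 30}
child = {"Sex": 0, "Pclass": 3, "Age": 8}

print(predict_forest(forest, adult))
print(predict_forest(forest, child))
```

Individual trees can disagree on the same passenger (here `tree_b` votes differently from the other two for `adult`), which is exactly the variability that combining many trees is meant to smooth out.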
